Anyone who’s tried to ship an AI agent to production knows the feeling: the model runs beautifully in the demo, then come sandboxing, checkpointing, credential management, retry logic for container failures — and suddenly three months are gone before any user sees anything.

That’s exactly what Anthropic is addressing with Claude Managed Agents, in public beta since April 8, 2026.

The Problem Nobody Likes to Name

Building a production-ready agent is not an AI problem. It’s an infrastructure problem.

An agent that handles real tasks needs an isolated execution environment that stays secure even when the model runs its own code; persistent state that survives container crashes; a credential store that lives outside the sandbox, so a prompt injection attack can’t immediately reach API keys; and tracing, so you know what broke and when. And all of this has to work for sessions that run not for seconds, but for hours.

According to Anthropic: “months of infrastructure work before you ship anything users see.” That’s not an exaggeration.

What Managed Agents Actually Delivers

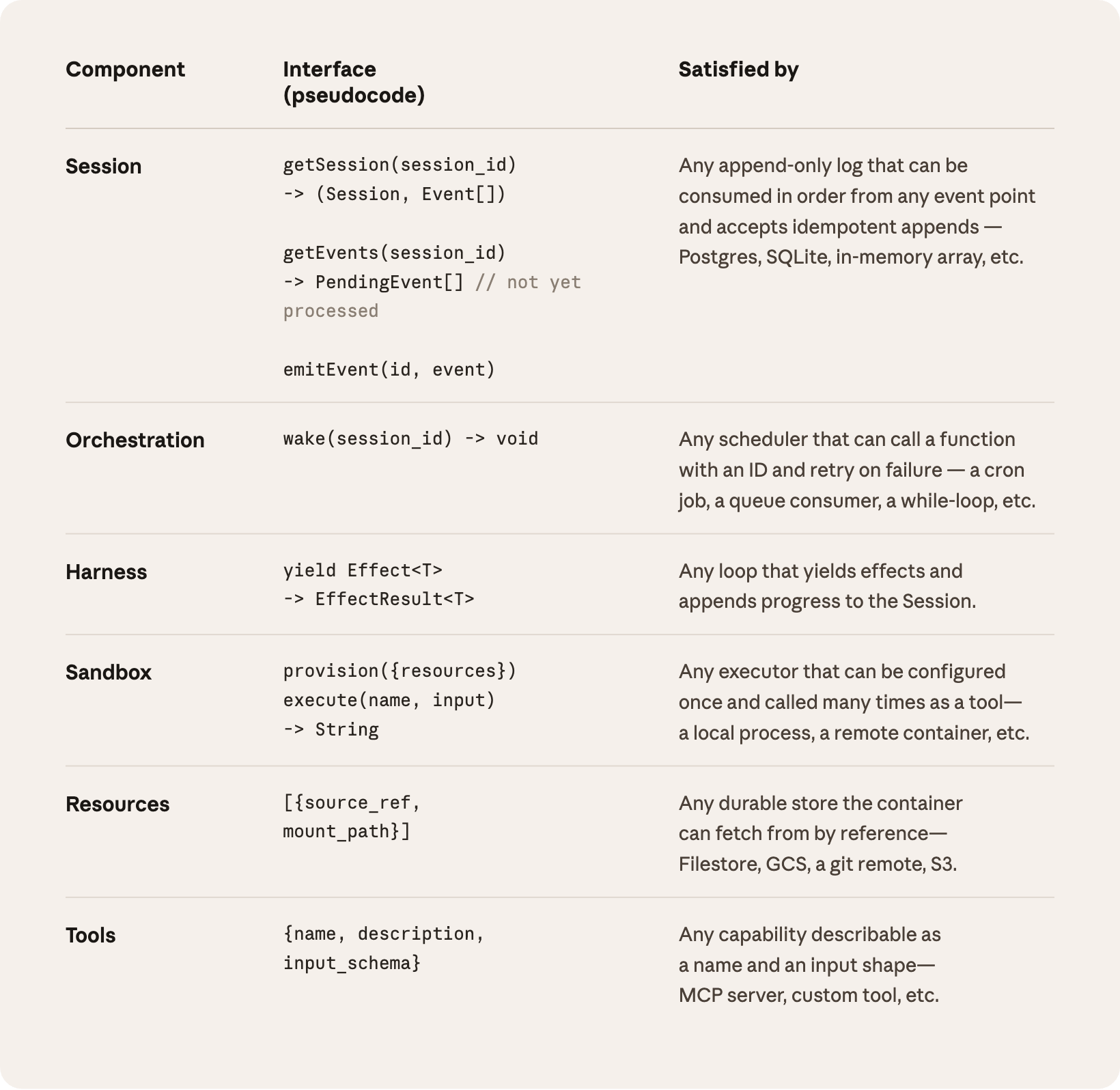

The core is a well-designed architecture of three decoupled components — and the decoupling is the real value.

Brain is Claude itself plus the harness — the orchestration logic that decides when to call which tool. Hands are ephemeral sandbox containers where generated code runs. Session is an append-only event log that lives outside all other components.

In practice: if a container crashes, the harness catches it as a tool-call error and boots a new one. If the harness itself crashes, a new instance can call wake(sessionId) to restore the last state from the session log and continue. No state is lost.
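To make the recovery path concrete, here is a minimal sketch of the idea, not Anthropic’s implementation. Only the name `wake` and the notion of an append-only session log come from the description above; `SessionLog`, `Harness`, `Event`, and the `step_done` event kind are hypothetical names chosen for illustration, and the log is kept in memory rather than in durable storage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    seq: int       # position in the log, assigned on append
    kind: str      # hypothetical event kind, e.g. "step_done"
    payload: dict


class SessionLog:
    """Append-only event log that lives outside the harness (in-memory stand-in)."""

    def __init__(self):
        self._events = {}

    def append(self, session_id, kind, payload):
        events = self._events.setdefault(session_id, [])
        event = Event(seq=len(events), kind=kind, payload=payload)
        events.append(event)  # events are only ever added, never edited
        return event

    def read(self, session_id):
        return tuple(self._events.get(session_id, ()))


class Harness:
    """Hypothetical orchestrator whose working state can be rebuilt from the log."""

    def __init__(self, log, session_id):
        self.log = log
        self.session_id = session_id
        self.completed_steps = []

    def run_step(self, name):
        # Record the step in the external log first, then update local state.
        self.log.append(self.session_id, "step_done", {"name": name})
        self.completed_steps.append(name)


def wake(log, session_id):
    """Start a fresh harness and restore its state by replaying the session log."""
    harness = Harness(log, session_id)
    for event in log.read(session_id):
        if event.kind == "step_done":
            harness.completed_steps.append(event.payload["name"])
    return harness


log = SessionLog()
first = Harness(log, "session-1")
first.run_step("clone repo")
first.run_step("run tests")
del first  # simulate a harness crash: the in-process state is gone

resumed = wake(log, "session-1")
resumed.run_step("write patch")  # continues where the crashed instance stopped
```

Because the harness holds nothing that the log does not also hold, any instance is as good as any other, which is the cattle property described in the next paragraph.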

This isn’t marketing — it’s a classic pets-vs-cattle problem that Anthropic has solved cleanly. In earlier architectures, the container was a “pet”: a name, a history, not replaceable. Now it’s cattle: stateless, interchangeable, scalable.

Security Through Structure, Not Hope

Credential handling is the part many teams get wrong in their in-house builds. When AI-generated code runs in the same container as the API keys it uses, a single successful prompt injection attack is enough to exfiltrate all credentials.

Managed Agents separates this structurally: for Git, the access token is injected at sandbox start and wired into the local Git remote — the agent can push and pull without ever seeing the token. For external services via MCP, OAuth tokens live in a separate vault; a proxy fetches them on demand and makes the actual API call. The harness never sees a credential.
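The vault-and-proxy pattern for external services can be sketched as follows. This is an illustration of the general pattern under stated assumptions, not the actual Managed Agents API: `Vault`, `CredentialProxy`, `fake_transport`, and `agent_code` are all hypothetical names, and the transport is a stub rather than a real network call.

```python
class Vault:
    """Hypothetical token store that lives outside the sandbox."""

    def __init__(self, tokens):
        self._tokens = dict(tokens)

    def fetch(self, service):
        return self._tokens[service]


def fake_transport(service, request, token):
    """Stand-in for the real outbound API call; reports only whether auth was attached."""
    return {"service": service, "echo": request, "authorized": bool(token)}


class CredentialProxy:
    """Fetches the token on demand and makes the call on the caller's behalf."""

    def __init__(self, vault, transport):
        self._vault = vault
        self._transport = transport

    def call(self, service, request):
        token = self._vault.fetch(service)  # the token exists only inside this method
        return self._transport(service, request, token)


def agent_code(proxy):
    """Code running in the sandbox: it holds a proxy handle, never a token."""
    return proxy.call("issue-tracker", {"action": "list_open_issues"})


vault = Vault({"issue-tracker": "tok-secret-123"})
proxy = CredentialProxy(vault, fake_transport)
result = agent_code(proxy)
```

In this toy version everything shares one Python process, so the boundary is only a convention. The structural guarantee in a real deployment comes from running the vault and proxy in a separate process or network segment that the sandbox cannot read.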

That’s the difference between “we thought about it” and “we made it impossible.”

Session as External Context Management

An often-underestimated challenge: what happens when a task runs longer than Claude’s context window?

The usual answer is compression — a summary gets saved, old tokens get dropped. The problem: irreversible decisions about what to keep lead to errors. You can’t know in advance which earlier information a later step will need.

Managed Agents solves this through the session log itself. It’s not internal model state — it’s an external object. Claude can use getEvents() to selectively reload sections: the last N events, everything before a certain timestamp, a specific tool call and its surrounding context. That’s significantly more robust than any compressed summary.
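The three access patterns named above can be sketched like this. Only the name `getEvents()` comes from the source; the Python spelling `get_events`, its parameters (`last_n`, `before`, `call_id`, `context`), and the `Event` fields are assumptions made for illustration, since the real signature is not given here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    ts: float                       # event timestamp
    kind: str                       # e.g. "message", "tool_call", "tool_result"
    call_id: Optional[str] = None   # set for events that belong to a tool call


def get_events(events, *, last_n=None, before=None, call_id=None, context=1):
    """Select a slice of an ordered event log.

    Filters are applied in this order: `before`, then `call_id` (with
    `context` neighbours on each side), then `last_n`.
    """
    selected = list(events)
    if before is not None:
        selected = [e for e in selected if e.ts < before]
    if call_id is not None:
        idx = next((i for i, e in enumerate(selected) if e.call_id == call_id), None)
        if idx is None:
            return []
        selected = selected[max(0, idx - context): idx + context + 1]
    if last_n is not None:
        selected = selected[-last_n:] if last_n > 0 else []
    return selected


session_events = [
    Event(1.0, "message"),
    Event(2.0, "tool_call", "c1"),
    Event(3.0, "tool_result", "c1"),
    Event(4.0, "message"),
    Event(5.0, "tool_call", "c2"),
]

recent = get_events(session_events, last_n=2)                   # the last N events
earlier = get_events(session_events, before=3.0)                # everything before a timestamp
around = get_events(session_events, call_id="c1", context=1)    # a tool call plus its neighbours
```

The point of the design is that none of these reads is destructive: the full log stays intact, so a later step can always ask for something an earlier summary would have thrown away.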

Who’s Already Using It — and What They’re Getting

Several companies have Managed Agents in production already:

Notion has embedded Claude directly into workspaces, so teams can delegate coding, presentations, and spreadsheets without leaving the app. Rakuten rolled out specialized agents for product, sales, marketing, and finance, each deployed within a week and connected to Slack and Teams. Asana is building “AI Teammates” that work alongside humans within projects; CTO Amritansh Raghav says Managed Agents helped them ship advanced features faster. Sentry connected its debugging tool Seer with an agent that automatically writes patches and opens pull requests, going from flagged bug to reviewable fix in one flow. Atlassian is working on letting developers delegate tasks directly from Jira.

These aren’t proof-of-concepts. These are production systems.

Pricing and What’s Still in Preview

Billing is usage-based: standard Claude API prices for tokens, plus $0.08 per active session-hour (idle time — waiting for user input or tool responses — doesn’t count). Web searches cost $10 per 1,000 requests.
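As a quick worked example, the two flat rates above can be turned into a small estimator. The rates are the ones quoted in this article; the function name and parameters are hypothetical, and token costs are passed in as a separate figure because they depend on the model and volume you use.

```python
from decimal import Decimal

SESSION_HOUR_USD = Decimal("0.08")           # per active session-hour (idle time excluded)
WEB_SEARCH_USD_PER_1000 = Decimal("10.00")   # per 1,000 web search requests


def estimate_cost_usd(active_hours, web_searches, token_cost_usd="0"):
    """Estimate the bill in USD: token cost plus session-hours plus web searches."""
    session_cost = Decimal(str(active_hours)) * SESSION_HOUR_USD
    search_cost = Decimal(str(web_searches)) / Decimal(1000) * WEB_SEARCH_USD_PER_1000
    total = Decimal(str(token_cost_usd)) + session_cost + search_cost
    return total.quantize(Decimal("0.01"))   # round to whole cents


# 3 active hours and 250 searches, token cost not included:
# 3 * 0.08 + 250 / 1000 * 10.00 = 0.24 + 2.50
print(estimate_cost_usd(3, 250))  # 2.74
```

At these rates the session-hour fee is small next to web search and token spend, so for most estimates the token figure you plug in will dominate the result.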

For most workloads that’s cheaper than running your own infrastructure once you factor in engineering time.

Still in Research Preview: multi-agent orchestration (one agent spawning and coordinating others), extended memory tools, and self-evaluation (an agent iterates until it hits a defined outcome).

What This Means for Your Team

If you’re building or planning an agent right now — the question is no longer “can we do this?” It’s “do we want to own the infrastructure ourselves?”

For teams that want to ship fast, Managed Agents is a clear win. The Brain/Hands/Session decoupling is solidly designed, and the security boundaries are structural rather than merely conventional. According to Anthropic, the decoupling also cut median TTFT (time to first token) by 60% and p95 TTFT by more than 90%.

For teams with very specific compliance requirements or a desire to control all infrastructure themselves, note that the Brain (Claude and the harness) still runs on Anthropic infrastructure. Managed Agents does support connecting the Hands to your own VPC, but where the Brain runs belongs on your vendor assessment checklist.

Our take: this is one of the most well-thought-out infrastructure architectures we’ve seen in the agentic AI space. Not an accidental product — the result of real production experience with Claude Code and internal agent deployments.

If you want to evaluate Managed Agents for your stack or need support building secure agentic workflows — get in touch. We’ve been building these systems from day one.